Microsoft’s New ‘Singularity’ AI System Might Have 100,000+ GPUs

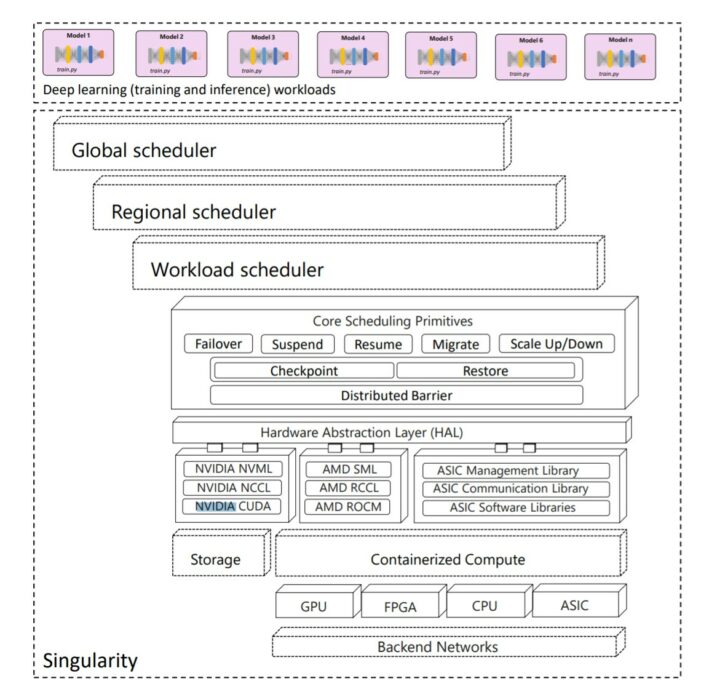

Microsoft’s Azure and Research teams are working on new AI infrastructure, which they’re subtly calling Singularity. The company describes Singularity as a brand-new, “planet-scale” AI scheduling system.

As Microsoft’s published technical paper explains, Singularity’s focus is to help Microsoft manage costs by driving high utilization for deep learning workloads. In other words, it aims to keep Microsoft’s global network of hardware busy while cutting the cost of running AI workloads.

Singularity can easily resize jobs and shift them to different infrastructure worldwide. According to the paper, a live job can be paused, relocated, and resumed precisely where it left off. Any job running on the new infrastructure can scale up or down according to requirements. This flexibility in AI workloads optimizes capacity usage and saves Microsoft time and money.
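To make the pause, relocate, and resume idea concrete, here is a minimal toy sketch in Python. It is not Singularity’s mechanism, which the paper describes at a much lower level. The `JobState` class and the `run`, `checkpoint`, and `restore` helpers are hypothetical names invented for illustration, and a running sum stands in for real model and optimizer state.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class JobState:
    """Hypothetical snapshot of a job: just enough to resume it."""
    step: int = 0
    running_sum: float = 0.0  # stand-in for model and optimizer state


def run(state: JobState, until_step: int) -> JobState:
    """Advance the toy job one step at a time until `until_step`."""
    while state.step < until_step:
        state.running_sum += state.step * 0.5  # stand-in for a training step
        state.step += 1
    return state


def checkpoint(state: JobState) -> bytes:
    """Serialize the state so it could be shipped to another machine."""
    return json.dumps(asdict(state)).encode("utf-8")


def restore(blob: bytes) -> JobState:
    """Rebuild the job state from a checkpoint at the destination."""
    return JobState(**json.loads(blob.decode("utf-8")))


# Uninterrupted run, for comparison.
baseline = run(JobState(), until_step=10)

# Run partway, pause, "move" the bytes elsewhere, then resume.
paused = run(JobState(), until_step=4)
blob = checkpoint(paused)
resumed = run(restore(blob), until_step=10)

print(resumed == baseline)
```

The resumed job ends in the same state as the uninterrupted one, which is the property a scheduler needs before it can move work around freely.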

The technical paper goes into detail about the scheduler itself rather than the entire infrastructure. It also describes a test run on a single server with eight Nvidia V100 GPUs. Scaled across a fleet, the Singularity project would have the backing of more than a hundred thousand GPUs, since Microsoft has roughly tens of thousands of such eight-GPU servers.
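The arithmetic behind the headline figure is simple, and a short Python sketch makes it explicit. Only the eight GPUs per server and the 100,000 threshold come from the text. The helper names are hypothetical, and the exact server count is not public, so the result is a lower bound rather than a reported number.

```python
GPUS_PER_SERVER = 8    # from the article: eight GPUs per server
TARGET_GPUS = 100_000  # the "100,000+" figure in the headline


def gpus_in_fleet(servers: int, gpus_per_server: int = GPUS_PER_SERVER) -> int:
    """Total GPUs in a fleet of identical servers."""
    return servers * gpus_per_server


def servers_needed(gpus: int, gpus_per_server: int = GPUS_PER_SERVER) -> int:
    """Smallest number of servers that provides at least `gpus` GPUs."""
    return -(-gpus // gpus_per_server)  # ceiling division


print(servers_needed(TARGET_GPUS))  # 12500
```

So any fleet of at least 12,500 eight-GPU servers, which sits at the low end of “tens of thousands”, clears the 100,000-GPU mark.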

“Singularity achieves a significant breakthrough in scheduling deep learning workloads, converting niche features such as elasticity into mainstream, always-on features that the scheduler can rely on for implementing stringent SLAs,” the paper reads.

There’s no indication yet of whether this new infrastructure will ever see the light of day. If it does, it can only be good news for the company.