How Facebook Let Advertisers Target ‘White Genocide’ Enthusiasts

People in the U.S. are still reeling from the horrific Pittsburgh synagogue shooting, yet Facebook has let another racist ad slip through its advertising platform.

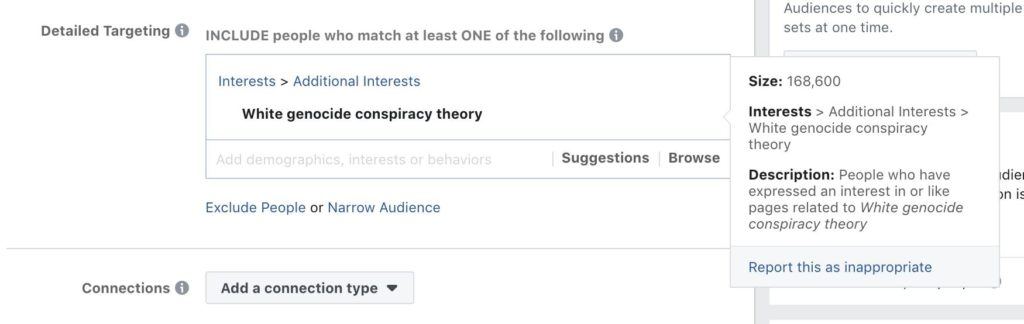

As reported by The Intercept, Facebook allowed marketers to select a target audience of people interested in the “white genocide” conspiracy theory.

The outlet ran a series of tests on Facebook’s automated platform and found that Facebook’s system had identified such a category on its own. The company offered this racism-based category among its predefined suggestions for advertisers.

Once the category was selected, Facebook would serve the ad to nearly 168,000 users who belonged to it. These are people who have expressed an interest in or liked pages related to the “white genocide conspiracy theory.”

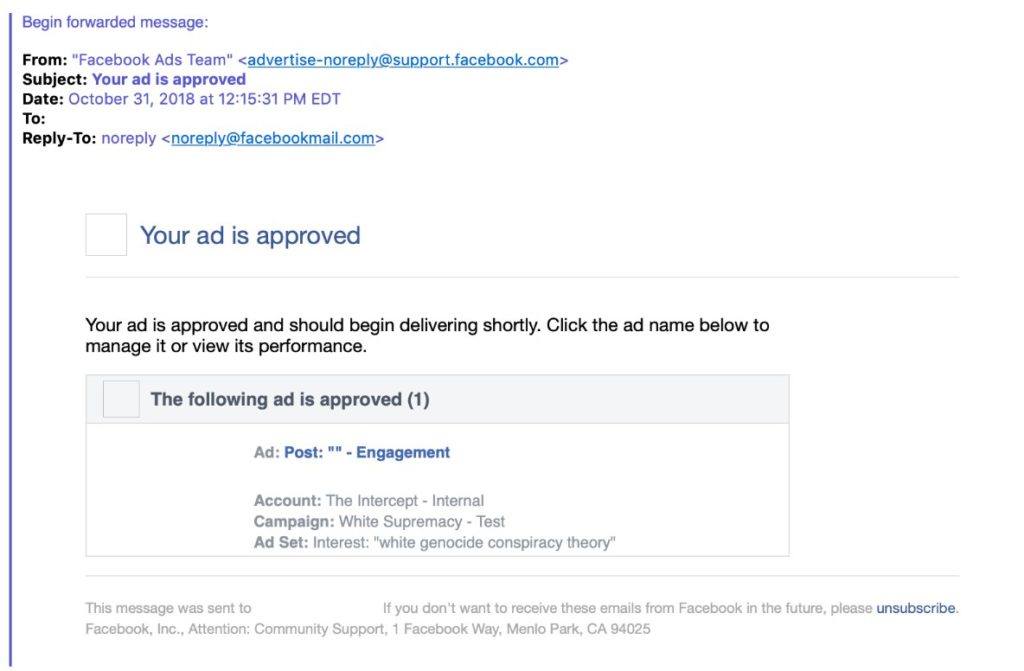

The investigators were able to select that category to promote two stories just after the Pittsburgh massacre, and Facebook’s advertising wing reportedly approved the ad promotion.

What’s more shocking is that the outlet made its ad easy to flag by naming it “White Supremacy — Test.” It should not have been hard to spot, yet the racist advertisement made it through Facebook’s approval process.

The social media giant couldn’t flag the ad because “it was based on a category Facebook itself created.”

Although Facebook uses a mix of AI and human review to add suggested categories to the system, the final decision on which “new interests” should be added is made by a human.

The fact that such a category existed on the platform is quite disturbing. Only after the outlet contacted Facebook about the targeting category did the company realize the blunder it had made.

A Facebook spokesperson said that the “targeting option has been removed,” and the ads have been taken down. “It’s against our ads principles and never should have been in our system to begin with. We deeply apologize for this error.”
